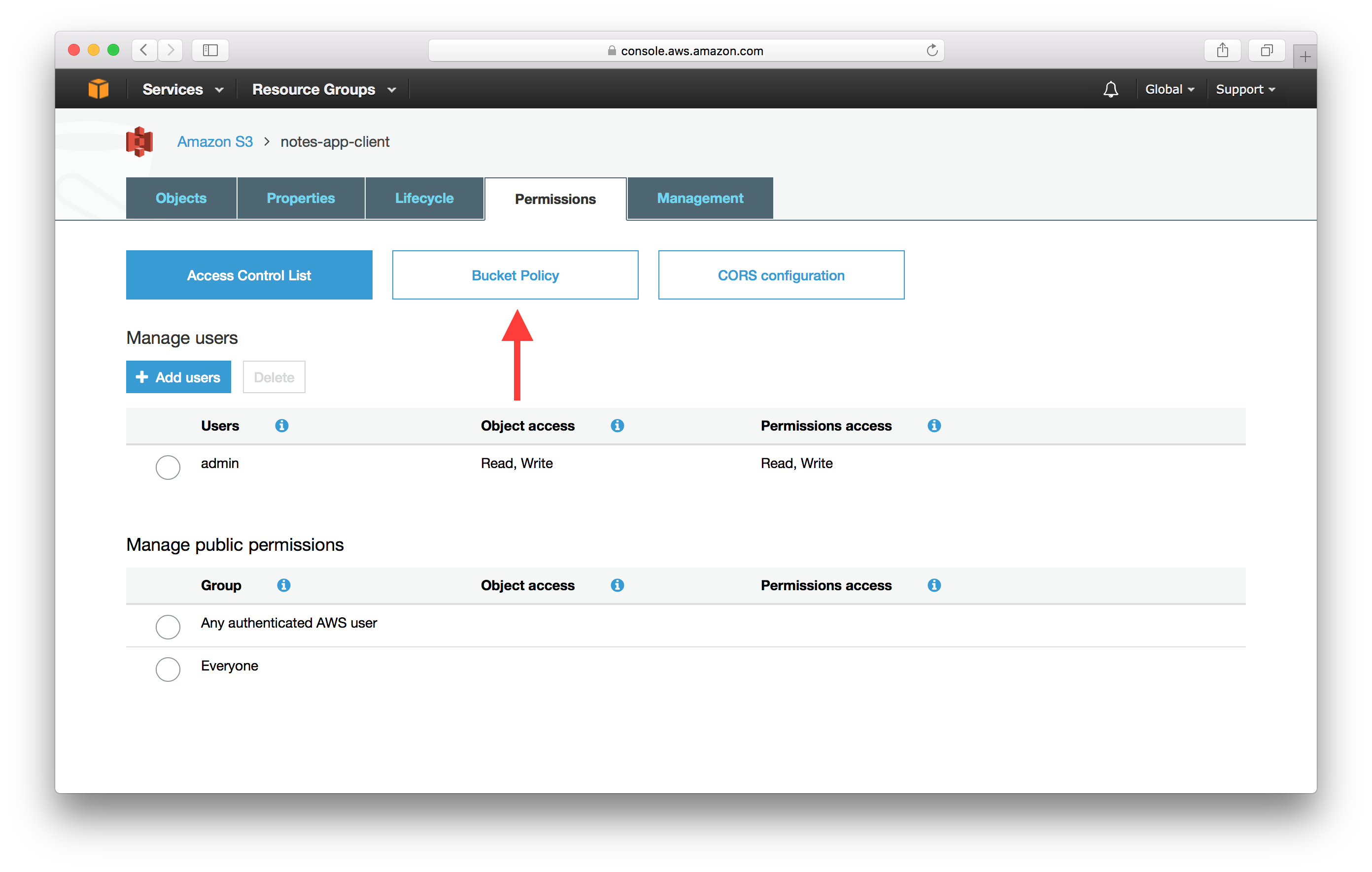

Every AWS account has an account ID number. In this policy we will be delegating access to the destination account's ID. The bucket policy can be accessed by going to AWS S3 -> select your bucket (in this case it's examplebucket) -> Permissions -> Policy.

"Resource": "arn:aws:s3:::examplebucket/*"

You can paste the above content inside the policy editor. Remember to change 111111111111 to the account ID of the destination AWS account, and also change examplebucket to your source bucket name. You can find the destination account ID by navigating to the My Account option in the top-right drop-down (in the destination account).

With this policy we have delegated permission to do S3 operations on our examplebucket to another AWS account ID. So the owner of the destination account (whose ID is mentioned in the policy) will now have proper permissions. But the destination account owner still needs to create another user for copying files, and delegate the permission it received from the source account to that new user.
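Only the `Resource` line of the policy survives above, so here is a rough sketch of what the two policies in this setup could look like: the bucket policy on the source side, and the user policy the destination account would attach to its new user. The action lists, the `Sid` values and the `destinationbucket` name are my assumptions for illustration, not the exact policy from this walkthrough.

```javascript
// Sketch only: action lists and Sid values are assumptions.

// Bucket policy attached to the SOURCE bucket; delegates access to the
// destination account as a whole.
function buildSourceBucketPolicy(bucketName, destinationAccountId) {
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Sid: 'DelegateS3Access', // hypothetical statement ID
        Effect: 'Allow',
        Principal: { AWS: `arn:aws:iam::${destinationAccountId}:root` },
        Action: ['s3:ListBucket', 's3:GetObject'], // assumed minimum for reading
        Resource: [
          `arn:aws:s3:::${bucketName}`,   // bucket-level actions (ListBucket)
          `arn:aws:s3:::${bucketName}/*`, // object-level actions (GetObject)
        ],
      },
    ],
  };
}

// Identity policy the DESTINATION account attaches to its copy user.
// Identity policies carry no Principal element.
function buildCopyUserPolicy(sourceBucket, destinationBucket) {
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Effect: 'Allow',
        Action: ['s3:ListBucket', 's3:GetObject'],
        Resource: [`arn:aws:s3:::${sourceBucket}`, `arn:aws:s3:::${sourceBucket}/*`],
      },
      {
        Effect: 'Allow',
        Action: ['s3:ListBucket', 's3:PutObject'],
        Resource: [`arn:aws:s3:::${destinationBucket}`, `arn:aws:s3:::${destinationBucket}/*`],
      },
    ],
  };
}

const bucketPolicy = buildSourceBucketPolicy('examplebucket', '111111111111');
const userPolicy = buildCopyUserPolicy('examplebucket', 'destinationbucket');
console.log(JSON.stringify(bucketPolicy, null, 2));
console.log(JSON.stringify(userPolicy, null, 2));
```

The printed JSON is what would go into the respective policy editors. Both policies are needed because cross-account access only works when the resource side and the identity side each allow it.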

A bucket is nothing but a construct to uniquely identify a place in S3 where you store and retrieve files. You need an access key and secret key pair to do operations on an S3 bucket. You can have as many buckets as you want. You can use the AWS CLI, or other command-line tools, to store and retrieve files from a bucket of your interest. Always remember that the namespace for bucket names is shared across the whole of S3, across all accounts. This means that if somebody in the world has a bucket named mybucket, you cannot have a bucket with that name. S3 takes care of scaling the backend storage as per your requirement, and your application needs to be programmed to interact with it. It also offloads the responsibility of serving files from the application to the AWS S3 API.

If a user in an AWS account has proper permissions to upload to and download from multiple buckets in that account, you can copy files between those buckets as well (buckets in the same account, that is). Recently I had a requirement where files needed to be copied from one S3 bucket to another S3 bucket in another AWS account. You can take a file from one S3 bucket and copy it to a bucket in another account by interacting directly with the S3 API. But this will only work if you have proper permissions. Although it appeared quite simple and straightforward at first, I could not nail it, simply because of the plethora of articles on the internet with different permission sets on this subject. Hence I am writing this, so that it can be helpful to others struggling to find a solution.

We are going to use one access key and one secret key to carry out this operation. You always need this key pair to do anything on an S3 bucket programmatically. In other words, we will need one single user who has proper permissions on both buckets for this copy operation.

Things to do on Account 1 (I am considering it the source account, from where files will be copied):

Step 1: Here we need an S3 bucket (if it already exists, well and good). Else go ahead and create an S3 bucket in the AWS S3 web console.

Step 2: We need to attach a bucket permission policy to the bucket created in the previous step. This step will actually delegate the required permission to the other AWS account (the destination account).
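To make the end goal concrete, here is a small sketch of the copy request this whole setup is building toward. It only builds the parameter object. With the `aws-sdk` package (AWS SDK for JavaScript v2) you would pass it to `s3.copyObject(params, callback)` using the single user's key pair. The `destinationbucket` name and the object key are hypothetical.

```javascript
// Sketch: parameters for an S3 CopyObject request (server-side copy).
// Bucket names and the key below are placeholders, not real resources.
function buildCopyParams(sourceBucket, destinationBucket, key) {
  // CopySource is "<source bucket>/<key>" and must be URL-encoded.
  // Each path segment is encoded separately so the slashes survive.
  const encodedKey = key.split('/').map(encodeURIComponent).join('/');
  return {
    Bucket: destinationBucket, // where the copy is written
    CopySource: `${sourceBucket}/${encodedKey}`,
    Key: key, // keep the same object key in the destination
  };
}

const copyParams = buildCopyParams(
  'examplebucket',
  'destinationbucket',
  'reports/2020 summary.csv'
);
console.log(copyParams);

// In a real script (assumes the aws-sdk package and configured credentials):
//   const AWS = require('aws-sdk');
//   new AWS.S3().copyObject(copyParams, (err, data) => { /* handle result */ });
```

Because the copy happens inside S3, the credentials making the call need read access on the source and write access on the destination at the same time, which is why one user with permissions on both buckets is required.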

I know it's quite hectic to go through all these configurations. Now that the configuration work is done, we can move on and get our hands dirty with Lambda.

Lambda Script for Uploading an Image to S3

In my API call, I send a query param named username and a request body containing an image as a Base64-encoded string. In Lambda, I extract this query param and the request body. I use the query param to name the image when uploading it to S3. Since the request body is a Base64 string, we have to decode it and then upload it to S3.

Following is my script for uploading the image to the S3 bucket.

    const AWS = require('aws-sdk')
    // get reference to S3 client
    var s3 = new AWS.S3()
    exports.handler = (event, context, callback) =>

Now the work is done to upload an image to S3. Let's focus on retrieving the uploaded image.

Retrieving the Uploaded Image from S3

For this, we first have to create a new GET method in API Gateway. As we did before for the POST call, we can create a new resource and a new method pointing to a new Lambda function with the same IAM role, as shown in fig 24.

The Simple Storage Service (S3) offering from AWS is pretty solid when it comes to file storage and retrieval. It has a good reputation for scalability and uptime. You can use S3 for storing media files, backups, text files, and pretty much everything other than something like database storage. Usually applications can interact directly with the S3 API to store and retrieve files. It's also highly secure, and access is granted only through policies attached to AWS user accounts (also called IAM accounts).
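The body of the upload handler described earlier is cut off, so here is a minimal sketch of the decoding step it talks about: take the username query param and the Base64 body, and build the parameters for an S3 upload. The event shape (`queryStringParameters`, `body`) assumes API Gateway's Lambda proxy integration. The bucket name, the `.jpg` suffix and the content type are also assumptions of mine.

```javascript
// Sketch: turn an API Gateway event into S3 PutObject parameters.
// Assumes Lambda proxy integration; bucket name, file extension and
// content type are placeholders chosen for illustration.
function buildUploadParams(event, bucketName) {
  const username = event.queryStringParameters.username;
  // The request body arrives as a Base64 string; decode it to raw bytes.
  const imageBuffer = Buffer.from(event.body, 'base64');
  return {
    Bucket: bucketName,
    Key: `${username}.jpg`, // image is named after the username
    Body: imageBuffer,
    ContentType: 'image/jpeg',
  };
}

// Example event with a tiny fake payload standing in for image bytes.
const sampleEvent = {
  queryStringParameters: { username: 'alice' },
  body: Buffer.from('hello').toString('base64'),
};
const uploadParams = buildUploadParams(sampleEvent, 'my-image-bucket');
console.log(uploadParams.Key, uploadParams.Body.length);

// Inside a real handler (assumes the aws-sdk package is available):
//   s3.putObject(uploadParams, (err, data) => callback(err, data));
```

Keeping the parameter building in its own function makes the decode step easy to check locally without deploying the Lambda or touching a real bucket.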
